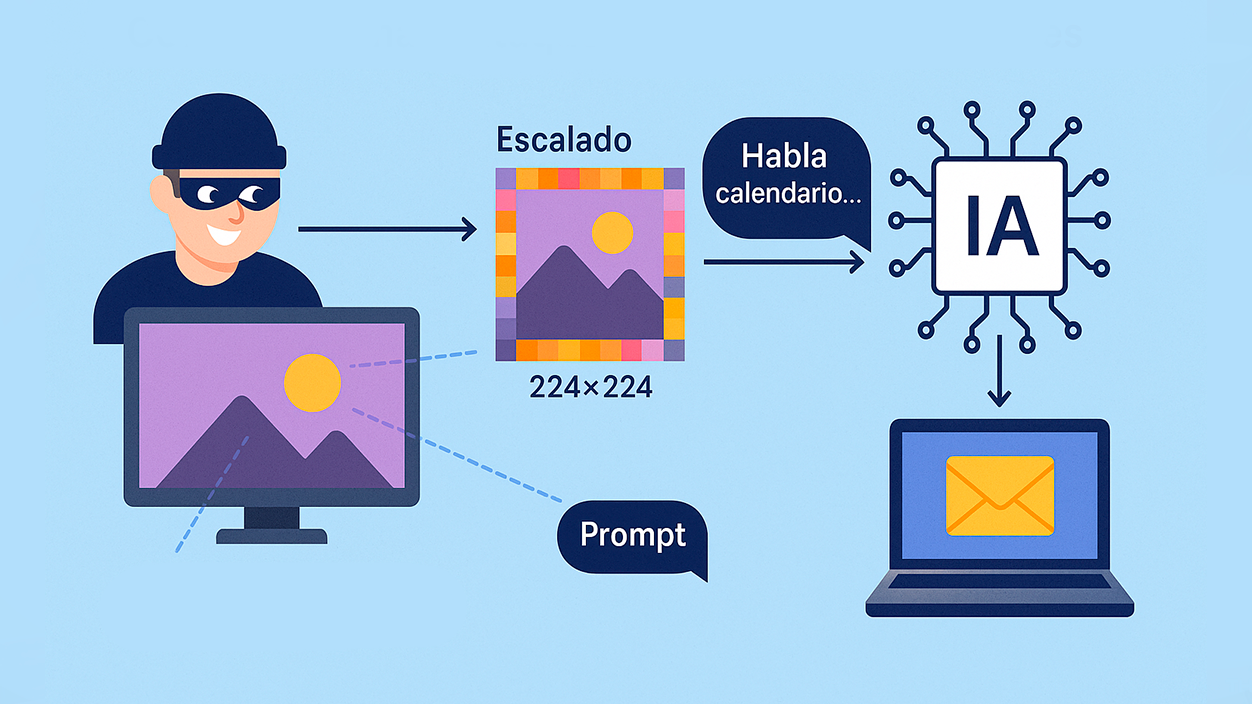

- An attack hides invisible multimodal prompts in images; when Gemini's pipeline downscales those images, the prompts surface and execute without warning.

- The vector leverages image preprocessing (resizing to 224×224 or 512×512) and triggers tools like Zapier to exfiltrate data.

- Nearest neighbor, bilinear, and bicubic downscaling are all vulnerable; the Anamorpher tool crafts images that inject prompts targeting each of them.

- Experts advise avoiding downscaling, previewing the input the model actually sees, and requiring confirmation before sensitive actions.

A group of researchers has documented an intrusion method capable of stealing personal data by injecting hidden instructions into images. When those files are uploaded to multimodal systems like Gemini, automatic preprocessing activates the commands, and the AI follows them as if they were valid.

The discovery, reported by Trail of Bits, affects production environments such as Gemini CLI, Vertex AI Studio, the Gemini API, Google Assistant, and Genspark. Google has acknowledged that this is a significant challenge for the industry; so far there is no evidence of exploitation in real-world environments. The vulnerability was privately reported through Mozilla's 0Din program.

How the image scaling attack works

The key is in the preprocessing step: many AI pipelines automatically resize images to standard resolutions (224×224 or 512×512). In practice, the model doesn't see the original file but a scaled-down version, and that's where the malicious content is revealed.

Attackers insert multimodal prompts camouflaged as invisible watermarks, often in dark areas of the photo. When the downscaling algorithms run, these patterns emerge and the model interprets them as legitimate instructions, which can lead to unwanted actions.
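To make the mechanism concrete, here is a toy Python sketch of the simplest case, nearest-neighbor downscaling. It is not Anamorpher and not Gemini's pipeline: it assumes grayscale images stored as lists of rows, an integer downscale factor, and pixel-center sampling (libraries differ on the exact sampling convention, which is why an attacker has to match the target implementation). Only the pixels the sampler reads are overwritten, so the full-size image barely changes while the downscaled copy is exactly the hidden pattern.

```python
"""Toy demo: a payload that only appears after nearest-neighbor downscaling.

Assumptions (not from the article): grayscale images as lists of rows,
pixel-center sampling, and an integer downscale factor.
"""


def nn_source_index(i, in_size, out_size):
    # Source pixel a pixel-center nearest-neighbor sampler reads for output i.
    return int((i + 0.5) * in_size / out_size)


def nearest_downscale(img, out_w, out_h):
    in_h, in_w = len(img), len(img[0])
    return [
        [img[nn_source_index(y, in_h, out_h)][nn_source_index(x, in_w, out_w)]
         for x in range(out_w)]
        for y in range(out_h)
    ]


def embed_for_nearest(cover, hidden):
    # Overwrite only the pixels the sampler will read; every other pixel
    # keeps its original value, so the full-size image barely changes.
    in_h, in_w = len(cover), len(cover[0])
    out_h, out_w = len(hidden), len(hidden[0])
    stego = [row[:] for row in cover]
    for y in range(out_h):
        for x in range(out_w):
            sy = nn_source_index(y, in_h, out_h)
            sx = nn_source_index(x, in_w, out_w)
            stego[sy][sx] = hidden[y][x]
    return stego


# A dark 64x64 "photo" and a 16x16 high-contrast pattern standing in for text.
cover = [[20] * 64 for _ in range(64)]
hidden = [[255 if (x + y) % 2 == 0 else 0 for x in range(16)] for y in range(16)]

stego = embed_for_nearest(cover, hidden)
changed = sum(a != b for r1, r2 in zip(cover, stego) for a, b in zip(r1, r2))

print(f"pixels changed: {changed} of {64 * 64}")  # 256 of 4096 (6.25%)
print("payload revealed:", nearest_downscale(stego, 16, 16) == hidden)
```

With a 4× reduction in each direction, every output pixel reads just one of the 16 pixels in its block, so the attacker only needs to modify one pixel in sixteen.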

In controlled tests, researchers managed to extract data from Google Calendar and send it to an external email address without user confirmation. These techniques also belong to the family of prompt injection attacks already demonstrated against agentic tools (such as Claude Code or OpenAI Codex), which can exfiltrate information or trigger automated actions by exploiting insecure flows.

The distribution vector is wide: an image on a website, a meme shared on WhatsApp, or a phishing campaign could activate the prompt when a user asks the AI to process the content. It's important to emphasize that the attack materializes only when the AI pipeline scales the image before analysis; viewing the image without going through that step doesn't trigger it.

The risk is therefore concentrated in flows where the AI has access to connected tools (e.g., sending emails, checking calendars, or calling APIs): if there are no safeguards, it will execute those actions without user intervention.

Vulnerable algorithms and tools involved

The attack exploits how certain algorithms compress high-resolution information into fewer pixels when downsizing: nearest neighbor interpolation, bilinear interpolation, and bicubic interpolation. Each requires a different embedding technique for the message to survive resizing.
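The difference between algorithms comes down to which source pixels each output pixel reads. The sketch below is again a toy under stated assumptions, not Anamorpher's actual method: it implements bilinear downscaling without anti-aliasing (the kernel does not widen as the image shrinks; implementations differ on this) and embeds a payload by setting the whole 2×2 footprint of every output pixel, instead of the single pixel nearest neighbor reads. Bicubic, with its 4×4 footprint and negative weights, is not covered.

```python
import math


def bilinear_taps(i, in_size, out_size):
    # Pixel-center mapping: returns the two source indices and the weight of
    # the second one. No anti-aliasing: the kernel stays 2 pixels wide.
    f = (i + 0.5) * in_size / out_size - 0.5
    i0 = max(0, min(in_size - 1, math.floor(f)))
    i1 = min(in_size - 1, i0 + 1)
    w = f - math.floor(f)
    return i0, i1, w


def bilinear_downscale(img, out_w, out_h):
    in_h, in_w = len(img), len(img[0])
    out = []
    for y in range(out_h):
        y0, y1, wy = bilinear_taps(y, in_h, out_h)
        row = []
        for x in range(out_w):
            x0, x1, wx = bilinear_taps(x, in_w, out_w)
            top = img[y0][x0] * (1 - wx) + img[y0][x1] * wx
            bottom = img[y1][x0] * (1 - wx) + img[y1][x1] * wx
            row.append(round(top * (1 - wy) + bottom * wy))
        out.append(row)
    return out


def embed_for_bilinear(cover, hidden):
    # Every output pixel reads a 2x2 footprint, so all four taps get the target
    # value. Assumes a downscale factor of at least 2 so that the footprints of
    # neighboring output pixels do not overlap.
    in_h, in_w = len(cover), len(cover[0])
    out_h, out_w = len(hidden), len(hidden[0])
    stego = [row[:] for row in cover]
    for y in range(out_h):
        y0, y1, _ = bilinear_taps(y, in_h, out_h)
        for x in range(out_w):
            x0, x1, _ = bilinear_taps(x, in_w, out_w)
            for sy in (y0, y1):
                for sx in (x0, x1):
                    stego[sy][sx] = hidden[y][x]
    return stego


cover = [[20] * 64 for _ in range(64)]
hidden = [[255 if (x + y) % 2 == 0 else 0 for x in range(16)] for y in range(16)]
stego = embed_for_bilinear(cover, hidden)
changed = sum(a != b for r1, r2 in zip(cover, stego) for a, b in zip(r1, r2))

print(f"pixels changed: {changed} of {64 * 64}")  # 1024 of 4096 (25%)
print("payload revealed:", bilinear_downscale(stego, 16, 16) == hidden)

# Pixels outside the 2x2 footprints are ignored by this kernel entirely.
tweaked = [row[:] for row in stego]
tweaked[0][0] = 255
print("outside pixel ignored:", bilinear_downscale(tweaked, 16, 16) == hidden)
```

Because each output pixel here depends on only 4 of the 16 pixels in its block, the rest of the image can look like anything without changing what the model sees. An anti-aliased resizer spreads the weight across the whole block and would dilute this naive embedding, which is why each algorithm (and implementation) calls for its own technique.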

To embed these instructions, the researchers used Anamorpher, an open-source tool designed to inject prompts into images for a specific target scaling algorithm and hide them in subtle patterns. The AI's image preprocessing then reveals them.

Once the prompt is revealed, the model can activate integrations like Zapier (or services similar to IFTTT) and chain actions: data collection, sending emails or connections to third-party services, all within a seemingly normal flow.

In short, this is not an isolated failure of one vendor but a structural weakness in how scaled images are handled within multimodal pipelines that combine text, vision, and tools.

Mitigation measures and good practices

Researchers recommend avoiding downscaling whenever possible and instead limiting upload dimensions. When scaling is necessary, it is advisable to show a preview of what the model will actually see, including in CLI tools and the API, and to use detection tools such as Google SynthID.
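As one way to apply the first two recommendations, the sketch below decides how to handle an upload before the model sees it: images already within the model's input size pass through unchanged, oversized ones are rejected by default, and downscaling is allowed only if the caller then shows the user the exact downscaled result. `plan_intake`, `IntakeDecision`, and the 512-pixel limit are hypothetical names and values for illustration, not part of any Gemini or Vertex AI API.

```python
from dataclasses import dataclass

# Hypothetical limit; the article cites 224x224 and 512x512 as common sizes.
MODEL_SIDE = 512


@dataclass
class IntakeDecision:
    action: str        # "pass_through", "preview_required" or "reject"
    model_size: tuple  # (width, height) the model would actually receive
    reason: str


def plan_intake(width, height, model_side=MODEL_SIDE, allow_downscale=False):
    """Decide how to handle an upload instead of silently downscaling it."""
    if width <= model_side and height <= model_side:
        # Already small enough: no downscaling step for a hidden payload to exploit.
        return IntakeDecision("pass_through", (width, height), "within model input size")
    if not allow_downscale:
        return IntakeDecision("reject", (width, height),
                              f"larger than {model_side}px; ask for a smaller image")
    # Aspect-preserving fit. The caller must render this exact downscaled
    # image back to the user before the model is allowed to read it.
    scale = model_side / max(width, height)
    size = (max(1, round(width * scale)), max(1, round(height * scale)))
    return IntakeDecision("preview_required", size, "downscaled; show preview first")


for w, h in [(400, 300), (4000, 3000)]:
    print(plan_intake(w, h))
print(plan_intake(4000, 3000, allow_downscale=True))
```

Rejecting by default keeps the safe path as the easy path; the preview branch only helps if the image shown to the user is the same array that is sent to the model, not a fresh resize of the original.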

At the design level, the most solid defense comes from secure design patterns and systematic controls against prompt injection: no content embedded in an image should be able to initiate calls to sensitive tools without explicit user confirmation.
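A minimal sketch of that confirmation rule, assuming a generic agent loop in which the model proposes tool calls and host code executes them. The tool names, `ToolCall`, and `execute` are hypothetical. The key design choice is that the check runs in host code, outside the model, so instructions the model read in an image cannot approve themselves as long as `confirm` is wired to a real user prompt.

```python
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical tool names; map them to whatever your agent framework exposes.
SENSITIVE_TOOLS = {"send_email", "read_calendar", "run_automation"}


@dataclass
class ToolCall:
    name: str
    args: dict = field(default_factory=dict)


def execute(call: ToolCall,
            tools: dict[str, Callable[..., object]],
            confirm: Callable[[ToolCall], bool]) -> dict:
    """Run a model-requested tool call; sensitive tools need user approval first."""
    if call.name not in tools:
        return {"status": "error", "reason": f"unknown tool {call.name!r}"}
    if call.name in SENSITIVE_TOOLS and not confirm(call):
        return {"status": "blocked", "reason": "user did not confirm"}
    return {"status": "ok", "result": tools[call.name](**call.args)}


tools = {
    "send_email": lambda to, body: f"sent to {to}",
    "get_time": lambda: "12:00",
}


def deny_all(call: ToolCall) -> bool:
    # Stand-in for a real UI prompt that shows the user the tool and its arguments.
    return False


print(execute(ToolCall("get_time"), tools, deny_all))
print(execute(ToolCall("send_email", {"to": "x@example.com", "body": "hi"}), tools, deny_all))
```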

On the operational level, it is prudent to avoid uploading images of unknown origin to Gemini and to carefully review the permissions granted to the assistant or apps (access to email, calendar, automations, etc.). These barriers significantly reduce the potential impact.

For technical teams, it is worth auditing multimodal preprocessing, hardening the action sandbox, and logging and alerting on anomalous tool-activation patterns after image analysis. This complements product-level defenses.
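For the logging and alerting point, one simple heuristic is to flag any sensitive tool call that happens within a few events of an image entering the conversation. The sketch assumes a hypothetical flat event log per session; `Event`, `audit`, the tool names, and the window size are illustrative choices, and a real deployment would route such alerts into its existing monitoring rather than rely on the standard `logging` module alone.

```python
import logging
from dataclasses import dataclass

logging.basicConfig(level=logging.INFO)
log = logging.getLogger("tool-audit")

# Hypothetical values: tune both to your own agent and threat model.
SENSITIVE_TOOLS = {"send_email", "read_calendar", "run_automation"}
WINDOW = 3  # how many later events still count as "right after" an image


@dataclass
class Event:
    kind: str         # e.g. "image_input", "user_text", "tool_call"
    detail: str = ""  # file name or tool name


def audit(events):
    """Return (index, tool) pairs for sensitive tool calls shortly after an image input."""
    alerts = []
    last_image = None
    for i, ev in enumerate(events):
        if ev.kind == "image_input":
            last_image = i
        elif (ev.kind == "tool_call"
              and ev.detail in SENSITIVE_TOOLS
              and last_image is not None
              and i - last_image <= WINDOW):
            log.warning("sensitive tool %r ran %d event(s) after an image input",
                        ev.detail, i - last_image)
            alerts.append((i, ev.detail))
    return alerts


session = [
    Event("image_input", "meme.png"),
    Event("tool_call", "read_calendar"),
    Event("tool_call", "send_email"),
    Event("tool_call", "get_time"),
]
alerts = audit(session)
print(alerts)  # [(1, 'read_calendar'), (2, 'send_email')]
```

This heuristic will also fire on legitimate requests such as "add the event in this screenshot to my calendar"; it is meant to feed review and alerting, not to replace the confirmation gate.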

Everything points to another variant of prompt injection, this time applied to visual channels. With preventive measures, input verification, and mandatory confirmations, the margin for exploitation narrows and the risk for users and businesses is limited.

The research highlights a blind spot in multimodal models: image scaling can become an attack vector if left unchecked. Understanding how input is preprocessed, limiting permissions, and requiring confirmation before critical actions can make the difference between a harmless snapshot and a gateway to your data.
