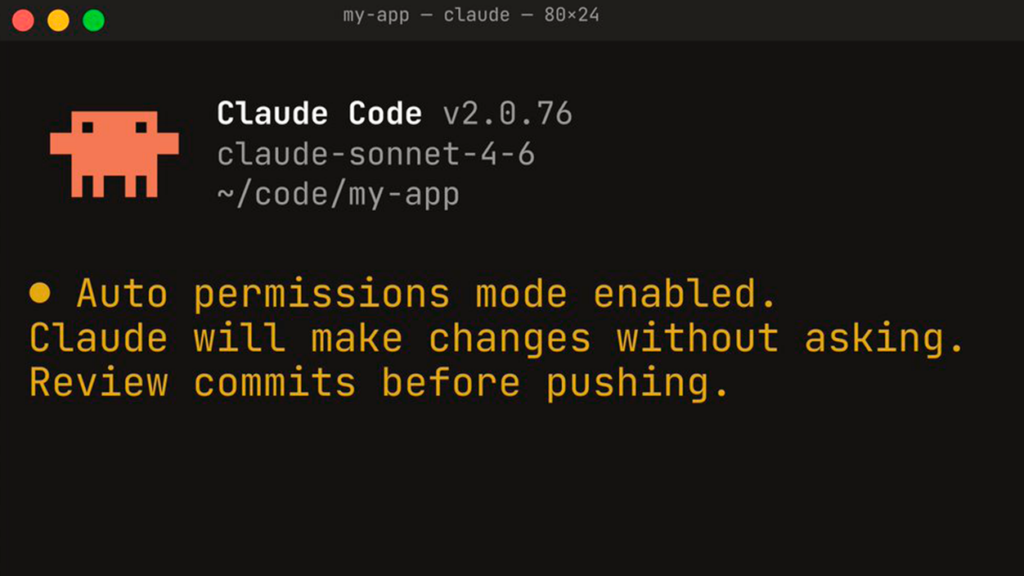

- Claude Code's new automatic mode executes programming tasks with fewer permission requests.

- An AI classifier reviews each tool call and blocks potentially destructive actions and attempts at data exfiltration.

- It currently works with Sonnet 4.6 and Opus 4.6, is in preview for Team customers, and is expected to arrive in Enterprise and API versions.

- Anthropic insists on using it in isolated environments: it reduces the risk compared with dangerously skip permissions, but it doesn't eliminate it.

The launch of Claude Code's automatic mode brings back to the table one of the big questions about AI-assisted programming: how far can we let the machine act on its own without jeopardizing entire projects? Anthropic has opted for a middle ground that attempts to square the circle: fewer interruptions, but with a braking system based on another artificial intelligence model.

This new approach comes at a time when code agents are no longer limited to auto-completing functions, but rather create folders, move files, execute terminal commands, and interact with entire repositories. In Europe and Spain, where security, data protection, and regulatory compliance are especially sensitive concerns, the balance between autonomy and control is key for these types of tools to be integrated into real development teams.

What does Claude Code's automatic mode aim to solve?

The new automatic mode is presented as a "middle ground" between constant manual review and total freedom. Until now, many developers working with Claude Code found themselves caught between two extremes: accepting a workflow riddled with permission requests or activating the more aggressive setting, known as dangerously skip permissions, which reduces checks and speeds up the pace at the cost of increasing risks.

With this feature, Anthropic aims to let long programming tasks run with less friction. Instead of asking for permission at almost every step, the system lets the AI proceed on its own when it detects low-risk operations, but introduces an automatic layer of monitoring to curb actions that could be dangerous for the work environment or the data handled by the project.

The idea connects with an everyday reality in many European software teams: projects hosted in Git repositories, continuous integration, cloud deployments, and environments where a poorly worded command can delete critical directories or expose sensitive information. It's not just about not breaking the operating system, but about avoiding damage to business code, internal services, or production applications.

In this scenario, automatic mode aims to maintain a smooth AI session without forcing developers and companies to completely relinquish their sense of control. It's an attempt to adapt the tool for everyday use, where the time wasted granting permissions one by one translates into real costs.

How does the classifier that monitors Claude work?

The heart of automatic mode is an AI classifier that checks each tool call before it is executed. Every time Claude Code wants to write a file, execute a shell command, modify directories, or interact with the repository, that action first goes through an automated filter that assesses the level of risk.

As Anthropic explained, this filter looks for, among other patterns, large-scale destructive operations, suspicious data movements, and potentially malicious execution. In practice, the system attempts to detect situations such as a mass deletion of files, the extraction of sensitive information from the work environment, or attempts to act on instructions covertly injected into the code or documentation.

If the classifier interprets the action as safe, it allows it to proceed without asking the user for confirmation. If, on the other hand, it considers it dangerous, it blocks it before it takes effect. Claude doesn't stand idly by in the face of that block: it tries to find another way to complete the task, for example, by reformulating the steps or modifying the approach to avoid the behavior that has been flagged as high risk.

When the assistant repeatedly attempts operations that keep hitting the classifier's wall, the flow stops: the system escalates the situation to the user through a new permission request. In this way, the AI doesn't get stuck in a loop of failed attempts without anyone noticing, but it also doesn't force you to manually review everything from the beginning.
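The allow, block, and escalate flow described above can be sketched in a few lines. This is only an illustration under stated assumptions: `ToolCall`, `classify`, `run_session`, the keyword rules, and the `max_blocks` threshold are all hypothetical names and values, and a simple keyword match stands in for the real AI classifier, whose internals Anthropic has not detailed here.

```python
from dataclasses import dataclass
from typing import Iterable

# Hypothetical sketch: none of these names or rules come from Claude Code.


@dataclass(frozen=True)
class ToolCall:
    tool: str      # e.g. "shell" or "write_file"
    argument: str  # command line or target path


# Toy stand-in for the AI classifier: a few obviously risky fragments.
RISKY_FRAGMENTS = ("rm -rf", "curl ", "scp ")


def classify(call: ToolCall) -> bool:
    """Return True when the call looks safe, False when it should be blocked."""
    return not any(fragment in call.argument for fragment in RISKY_FRAGMENTS)


def run_session(attempts: Iterable[ToolCall], max_blocks: int = 3) -> list[str]:
    """Gate each attempted tool call; escalate after repeated consecutive blocks.

    The threshold of 3 is an assumption; the article only says "repeatedly".
    """
    log: list[str] = []
    consecutive_blocks = 0
    for call in attempts:
        if classify(call):
            consecutive_blocks = 0
            log.append(f"allowed: {call.argument}")
            continue
        consecutive_blocks += 1
        log.append(f"blocked: {call.argument}")
        if consecutive_blocks >= max_blocks:
            # Stop retrying and hand the decision back to the human.
            log.append("escalated: asking the user for permission")
            break
    return log
```

Feeding `run_session` one harmless call followed by three variations of a blocked deletion would log one allowed entry, three blocked entries, and a final escalation, after which no further calls run.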

This architecture introduces an important shift: part of the trust moves from the main code model to the model that monitors it. Instead of always relying on the direct judgment of the agent that writes and executes commands, the user also comes to depend on the quality of the system that decides what is dangerous and what is not within the development environment.

Permissions, isolated environments, and risks that remain

Anthropic has been explicit in emphasizing that automatic mode reduces the risk compared to the option of bypassing permissions, but does not eliminate it. The company continues to recommend working in isolated environments, something very common in European companies that carefully separate testing systems from production systems for both technical and regulatory reasons.

In practice, this means that the assistant typically operates on a specific folder hierarchy or within containers and development machines set up for experimentation. Even so, within that limited space, an entire repository could be damaged, especially if an error affects core project files or components shared by multiple teams.
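To make the idea of confining an agent to a specific folder hierarchy concrete, here is a minimal sketch of a path check. It is not how Claude Code enforces its limits; the function name `is_inside_workspace` and the example paths are assumptions made for illustration.

```python
from pathlib import Path


def is_inside_workspace(workspace: Path, target: str) -> bool:
    """Return True if target, resolved against workspace, stays inside it.

    Hypothetical helper: resolving first collapses ".." segments and
    absolute paths, so escapes from the workspace are detected.
    """
    root = workspace.resolve()
    candidate = (root / target).resolve()
    return candidate == root or root in candidate.parents


workspace = Path("/tmp/project")  # assumed sandbox directory
print(is_inside_workspace(workspace, "src/main.py"))
print(is_inside_workspace(workspace, "../other/secret.txt"))
```

A check like this only keeps actions inside the sandbox. It does nothing about damage within it, which is why a boundary on its own does not protect the repository that lives inside that folder.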

Experiences documented by early testers show that, even with clear limits, Claude Code has corrupted codebases in previous sessions. These precedents explain why the debate on permissions is not merely an academic matter: those who have already experienced a mishap with an overconfident AI view any function that expands its autonomy with some caution.

Another warning issued by Anthropic itself is that the classifier can be wrong. It cannot be ruled out that certain truly dangerous actions slip through the filter if the context is not properly understood, or that harmless operations are blocked out of overzealousness. For European environments with auditability requirements, this possibility necessitates maintaining good backup practices and technical reviews.

At that point, the role of developers and systems administrators remains essential: automated functions help, but they do not replace human supervision or internal security policies. Many companies will continue to combine the new feature with daily backups, snapshots, or hardened version control systems to minimize the consequences of any errors.

Compatible models, availability, and operating costs

In this initial phase, Anthropic has launched automatic mode as a research preview for Team plan customers. According to information shared by the company, the feature will be rolled out in the coming days to users of Enterprise plans and those who integrate Claude Code via API, a channel especially relevant for European companies that connect the tool with their own internal systems.

For now, compatibility is limited to two specific models: Sonnet 4.6 and Opus 4.6. This means that organizations working with other Claude variants will need to adjust their configuration if they want to test automatic mode in their development workflow. Support for older models has not yet been announced.

Anthropic has also warned that the additional classification layer has a cost. Each tool action goes through an extra evaluation process that slightly increases both token consumption and latency. In environments with hundreds of tool calls per session, this additional cost can be noticeable both on the bill and in response times.
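A back-of-the-envelope calculation shows how a small per-call overhead adds up across a long session. The figures below (300 tool calls, 200 extra tokens, and half a second per check) are invented placeholders, not numbers published by Anthropic; substitute measurements from your own sessions.

```python
def classifier_overhead(
    tool_calls: int, tokens_per_check: int, seconds_per_check: float
) -> tuple[int, float]:
    """Return (extra tokens, extra seconds) added by one check per tool call."""
    return tool_calls * tokens_per_check, tool_calls * seconds_per_check


# Placeholder inputs chosen only to illustrate the arithmetic.
extra_tokens, extra_seconds = classifier_overhead(
    tool_calls=300, tokens_per_check=200, seconds_per_check=0.5
)
print(f"{extra_tokens} extra tokens, {extra_seconds:.0f} s of added latency")
# prints "60000 extra tokens, 150 s of added latency"
```

Because the overhead scales linearly with the number of tool calls, sessions with few calls barely notice it, while long agentic runs are where the bill and the wait both grow.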

For European companies that already closely monitor spending on cloud-based AI services, this detail is significant. The decision to activate or deactivate automatic mode will likely involve a cost-benefit analysis: fewer interruptions and slightly more security, in exchange for a moderate increase in consumption and time. In large organizations, the balance could vary depending on the type of project or the environment (development, testing, staging, production).

Regarding internal management and governance, the company has established mechanisms so that administrators can limit or disable the use of automatic mode in certain contexts, something especially relevant in companies that operate in several EU countries with different regulatory frameworks and security policies.

One more step towards more autonomous code agents

Anthropic's move is part of a growing competition to offer increasingly autonomous development agents. Tools such as GitHub Copilot, OpenAI solutions, and specialized editors are evolving from autocomplete to systems capable of performing tasks from start to finish with minimal human intervention.

In that context, Claude Code's automatic mode functions almost as an experiment in what controlled autonomy should look like in professional software environments. Instead of promising total freedom, the proposal is closer to a pact: the AI executes everything that falls within a reasonable safety framework, and hands over to the user anything that falls outside those limits.

This approach may be particularly attractive to European technical teams accustomed to dealing with audits, traceability requirements, and strict regulatory frameworks. Having an agent that not only acts, but is also monitored by another model, fits better with a layered control culture than with the idea of leaving everything in the hands of a single all-powerful AI.

Anthropic itself acknowledges that the system will evolve over time. Claude Code has been on the market for a relatively short time, but it has already influenced the way many developers work. The incorporation of this automatic mode could become another element of that change, provided that experience in real cases shows that the classifier's false positives and negatives remain at acceptable levels.

Meanwhile, European companies interested in testing the feature will have to assess whether their internal processes and policies are prepared to integrate such an agent: separating environments, adjusting permissions, documenting usage, and, above all, defining what degree of autonomy they are willing to grant to an AI within their development cycle.

With this automatic mode, Anthropic joins the race for more independent programming agents, but it does so by betting on a strategy of supervised autonomy, based on a classifier that acts as a guardrail between productivity and risk. For studios, startups, and established teams in Spain and the rest of Europe, the tool opens the door to more agile workflows, provided it is combined with good security practices, cost control, and a realistic dose of confidence in what AI can do today without jeopardizing code stability.
