- Configuring Moltbot in isolated environments (VM, Docker, NAS) drastically reduces the impact of failures or attacks.

- Gateway security is key: limiting the network, using VPNs, robust tokens, and regular audits prevents unauthorized access.

- Connecting Moltbot to “openai-compatible” API proxies allows the use of multiple models (Claude, GPT, Gemini) at a lower cost.

- Good model management, fallback strategies, and usage limits make Moltbot useful and sustainable over time.

Moltbot has become one of the most striking AI agents of the moment. It can control your computer, talk to you via WhatsApp or Telegram, and automate a multitude of daily tasks. But along with all that potential come significant risks if you don't configure it carefully, especially regarding security and how it connects to AI model APIs.

In this guide you will find a complete explanation of what Moltbot is, how to use it, and how to keep it well protected, as well as the best way to connect it to model providers using OpenAI-compatible API proxies. You'll see everything from basic examples to advanced configurations: config.json, YAML, environment variables, commands, and best practices to avoid leaving your machine open to just anyone.

What is Moltbot and how does it differ from a ChatGPT-type chat?

Moltbot (formerly known as Clawdbot) is a self-hosted AI personal assistant. It runs locally on your machine or server and connects to multiple messaging platforms via a WebSocket-based gateway. It's not just a chat application, but an agent capable of acting independently within your system.

Unlike a classic chat like ChatGPT, which is reactive (you open the website, ask a question and that's it), Moltbot works in a much more proactive way. It can send you messages via WhatsApp or Telegram, monitor services, check your email or calendar, and make decisions based on your instructions and the context it gathers.

This agent relies on external AI models (Claude, GPT, Gemini, etc.). Moltbot reaches these models through their official APIs or via OpenAI-compatible proxies. Moltbot isn't "AI" itself; it's the orchestration and automation layer that connects these APIs to your operating system, accounts, and apps.

All of this runs on your own infrastructure: from a desktop PC, a dedicated server, a VPS, a Raspberry Pi, or even a QNAP NAS if you combine it with an Ubuntu desktop within Linux Station. This gives you flexibility, but it also places the responsibility for configuring it securely on you.

What Moltbot can do on your computer and services

When you install Moltbot and give it full permissions, it becomes an “assistant superuser” that can touch almost everything on the machine it's running on. That's its great advantage for automation, and also the main source of danger if something goes wrong.

The most relevant capabilities of Moltbot on a desktop or server include:

- Full access to the system through the shell, being able to execute commands, scripts or scheduled tasks.

- Browser control with your open sessions. This allows it to operate on websites where you are already authenticated.

- Reading and writing to the file system, with the ability to create, modify or delete documents and folders.

- Access to email, calendar, and other connected services, provided you link them explicitly.

- Persistent memory between sessions, preserving context, history, and relevant data from your interactions.

- Proactive message sending through the messaging apps you configure, without you having to always start the conversation.

- Integration with dozens of applications and platforms: WhatsApp, Telegram, Discord, Slack, Signal, iMessage, Gmail, GitHub, Spotify, Hue, Obsidian, Notion, Trello, Nextcloud, Home Assistant, 1Password, home automation services, cameras, and many more.

It is also capable of downloading and installing software, browsing websites, compiling summaries, generating documents, updating your calendar, managing tasks in Trello or Notion, and controlling smart home devices based on rules you define. The more access you give it to your services, the more it can do for you, but the greater the impact of a failure or attack will be.

Risks and security issues when using Moltbot

Giving Moltbot full access to your computer is like giving the keys to your house to a very clever assistant, who can make mistakes or be manipulated if not properly protected. Even the developers and experts themselves acknowledge that there is no such thing as a "perfectly secure" setup.

The major dangers to your data and privacy include:

- Exposing the Gateway to the Internet without adequate protection, leaving the administration panel and port 18789 accessible to anyone if you open it to the outside.

- Overly permissive access policies that allow other people or bots to interact with your agent as if it were you.

- Prompt injection attacks, hidden in documents, websites or messages that Moltbot processes and that alter its original instructions.

- Malicious plugins or extensions loaded alongside the Gateway, capable of executing unwanted code.

- Misinterpretations or “hallucinations” of the AI model, that lead the agent to delete, overwrite or send sensitive information without that being your real intention.

The situation worsens if you expose the control panel without filters. Hundreds of instances have been detected on Shodan listening on the default port, which means an attacker could gain control of the assistant and, by extension, of all the accounts and services integrated into it.

One particularly delicate point is prompt injection. If Moltbot analyzes PDFs, HTML emails, or web pages, these may include hidden instructions (in signatures, invisible HTML code, quotes, or conditions) telling it to ignore your commands and perform actions contrary to your interests, such as exfiltrating data or disabling security controls.

Basic best practices: how to use Moltbot wisely

The first recommendation from all the experts is crystal clear: do not install Moltbot on your main computer with all your sensitive data. Ideally, it should be set up in an isolated environment where potential damage is very limited.

Highly recommended options for a safer deployment are:

- Dedicated virtual machine based on Linux, without direct access to your local network or other critical services.

- Well-isolated Docker container, without sharing volumes with other apps or exposing unnecessary ports.

- Secondary desktop or laptop computer, not the one you use for critical work or highly private information.

In these environments, you can decide what data Moltbot sees, what passwords are saved in the browser, which email accounts are linked, and which applications are integrated. You don't need to give it access to your entire digital world from the very beginning.

In addition, it is advisable to limit as much as possible the types of files and sources the agent interacts with. If you want to reduce the risk of prompt injection, try to have it process only documents created by you or from highly trusted sources, and define clear validation policies before executing critical commands.
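As a rough illustration of what a validation policy could look like, the sketch below flags shell commands that should wait for human confirmation before running. The pattern list and the function name are hypothetical and are not part of Moltbot; a real policy would need to be stricter and tailored to your environment.

```python
import re

# Hypothetical deny-list: commands matching any of these patterns should
# never run without an explicit human "yes". Illustrative, not exhaustive.
RISKY_PATTERNS = [
    r"\brm\b.*\s-\w*[rf]",          # recursive or forced deletes
    r"\bsudo\b",                    # privilege escalation
    r"\bcurl\b.*\|\s*(sh|bash)\b",  # piping a download straight into a shell
    r"\bmkfs\b|\bdd\b",             # disk-level operations
]


def needs_confirmation(command: str) -> bool:
    """Return True if the command matches at least one risky pattern."""
    return any(re.search(pattern, command) for pattern in RISKY_PATTERNS)
```

A deny-list like this only catches obvious cases; an allow-list of the few commands you actually expect is usually the safer design.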

Security recommendations for the Gateway and remote access

The nerve center of Moltbot is its web administration gateway, which can either listen only on localhost or listen on all network interfaces to allow remote access. This is where many users get into trouble by opening it directly to the internet without filters.

To minimize risks, there are several lines of defense you should implement, depending on how strict you need to be:

1. Limit network reach and use a firewall

In most installations the Gateway listens only in “loopback” mode (127.0.0.1), so it can only be reached from the machine where it is installed. This is the recommended default for many personal use cases.

If you choose remote access, you need to restrict it with a firewall. On Linux you can use iptables to allow only specific IPs to reach the Gateway port (18789 by default) and drop all other connections:

sudo iptables -A INPUT -p tcp --dport 18789 -s TRUSTED_IP -j ACCEPT

sudo iptables -A INPUT -p tcp --dport 18789 -j DROP

It is important to apply the rules in this order, because iptables evaluates them top to bottom and the ACCEPT must come before the DROP, and to save them (for example, with iptables-save) so that they persist after a reboot. This ensures that only a few trusted machines can access the panel.
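To confirm that the firewall behaves as intended, you can try to open a TCP connection to the Gateway port from a machine that should be blocked and from one that should be allowed. The following is a minimal sketch using only the Python standard library; the function name is made up for this example.

```python
import socket


def is_port_open(host: str, port: int, timeout: float = 2.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts (dropped packets) and unreachable hosts.
        return False
```

Run from an untrusted machine, `is_port_open("your-server-address", 18789)` should return False once the DROP rule is active; from an allowed IP it should return True while the Gateway is running.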

2. VPNs, virtual private networks and secure tunnels

If you need to access the Gateway from outside your home or office, the cleanest way is to first connect to your network using an encrypted and authenticated VPN, and treat the Moltbot panel as if it were only on the intranet.

You can set up OpenVPN or WireGuard servers in your infrastructure, or you can use solutions such as ZeroTier or Tailscale, which create virtual private networks without needing to open ports on the router. This way you access the Gateway as if it were a local service, while the traffic crosses the Internet securely.

Another alternative is to use Cloudflare Tunnels with Zero-Trust authentication. Alternatively, you could deploy a reverse proxy like Traefik combined with an additional authentication system such as Authelia. The idea is that no one can access the web panel without first passing through several layers of verification.

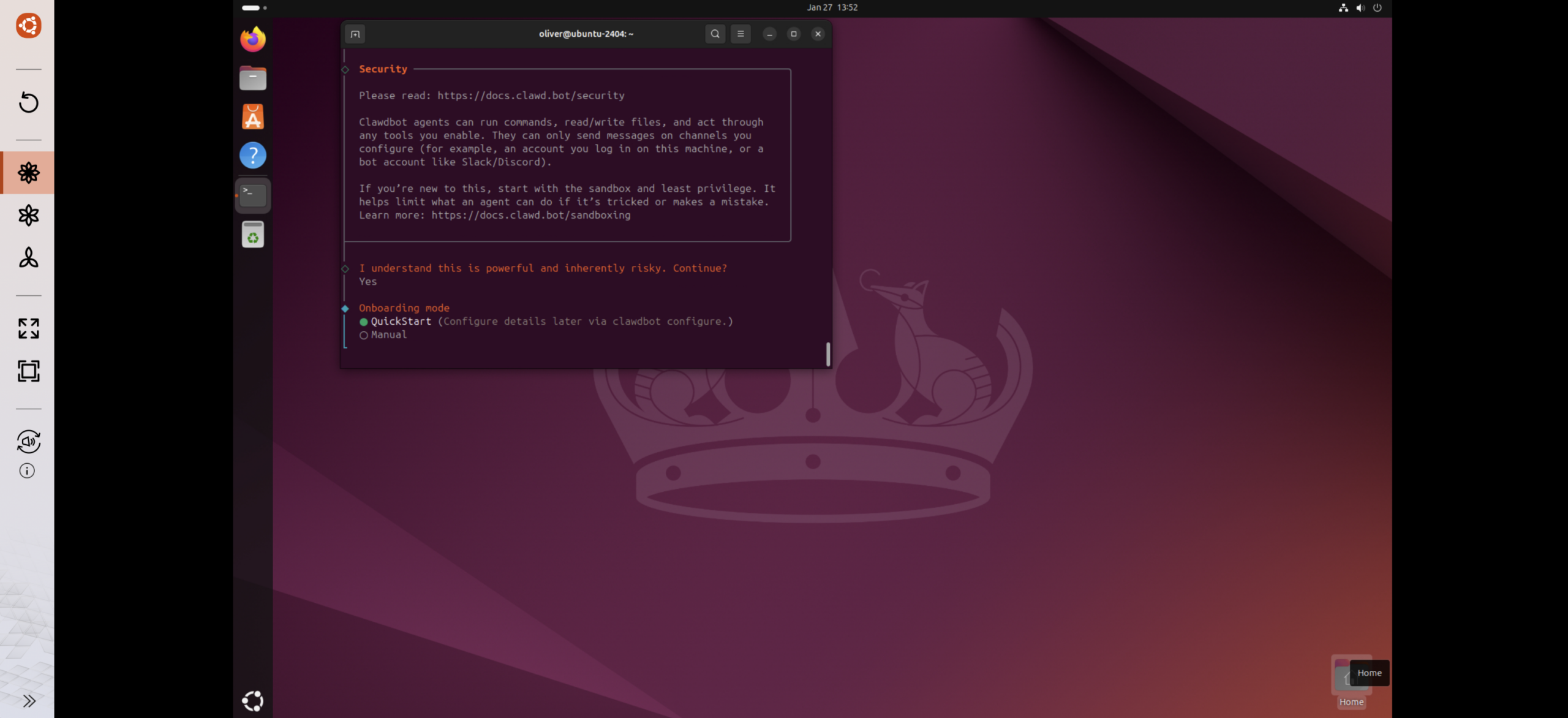

3. Robust token authentication

Even if you're only using Moltbot locally, enabling strong authentication is highly recommended. You can generate an access token for the Gateway by running a diagnostic utility from the project itself (historically accessible with commands like clawdbot doctor --generate-gateway-token or equivalents under the new name).

That token will be stored in the agent's configuration files, and you will have to enter it when you access the web interface, either from https://localhost:18789 or through a protected public domain.
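If you ever need to create or check a token yourself (for example, in front of a reverse proxy), the sketch below shows what "robust" means in practice: high-entropy random bytes and a constant-time comparison. This is not Moltbot's own generator, and the function names are illustrative.

```python
import hmac
import secrets


def generate_token(num_bytes: int = 32) -> str:
    """Return a URL-safe random token built from num_bytes of OS-level entropy."""
    return secrets.token_urlsafe(num_bytes)


def token_matches(presented: str, expected: str) -> bool:
    """Compare two tokens in constant time to avoid leaking information via timing."""
    return hmac.compare_digest(presented.encode("utf-8"), expected.encode("utf-8"))
```

Thirty-two random bytes is far beyond what can be brute-forced, whereas a short or human-chosen token is not.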

4. Granular control of access and messaging accounts

If you're going to use a Telegram bot as your main interface, configure it with maximum privacy. Create the bot with BotFather, obtain the token, and add it only to the Moltbot settings, without sharing it with anyone else.

In BotFather's settings you can restrict the bot's use: prevent it from being added to groups, make sure group privacy is enabled, and disable administrator privileges. Within Moltbot, limit which users or chat IDs are authorized and avoid sharing the bot with people you don't fully trust.
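The idea of authorizing only specific chat IDs can be pictured with a small allow-list check. The sketch below reads the chat ID from a Telegram update in the shape the Bot API delivers it (message.chat.id); the allow-list value and the function name are placeholders, and this is not how Moltbot implements the check internally.

```python
# Placeholder ID: replace with the chat IDs you actually trust.
ALLOWED_CHAT_IDS = frozenset({123456789})


def is_authorized(update: dict, allowed: frozenset = ALLOWED_CHAT_IDS) -> bool:
    """Return True only if the update comes from a chat on the allow-list."""
    chat_id = update.get("message", {}).get("chat", {}).get("id")
    return chat_id in allowed
```

Anything that does not carry a recognized chat ID is rejected by default, which is the safe direction for a bot with this much access.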

5. Audits and activity monitoring

A major advantage of Moltbot is that it records much of its internal activity. The agent's sessions and actions are stored in paths such as ~/.clawdbot/agents/main/sessions (or equivalents under the new structure), where you can check what has been done on your behalf.

In addition, the agent itself includes security auditing utilities capable of reviewing your settings and warning you of dangerous configurations. Commands such as clawdbot security audit, its in-depth version with --deep, or even a --fix option can help you detect and, if you wish, correct problems, although it's always best to manually review the proposed changes.
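For a quick manual review, it can help to list the most recently modified files in the sessions directory and open them by hand. The sketch below makes no assumption about the file format; it only sorts files by modification time, and the function name is invented for this example.

```python
from pathlib import Path


def recent_session_files(sessions_dir: Path, limit: int = 5) -> list[Path]:
    """Return up to `limit` files under sessions_dir, newest first."""
    if not sessions_dir.is_dir():
        return []
    files = [p for p in sessions_dir.rglob("*") if p.is_file()]
    files.sort(key=lambda p: p.stat().st_mtime, reverse=True)
    return files[:limit]
```

For example, `recent_session_files(Path.home() / ".clawdbot/agents/main/sessions")` uses the path mentioned above; adjust it if your installation uses the new directory name.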

Install and use Moltbot on a QNAP NAS with Ubuntu Linux Station

A very practical way to have Moltbot running 24/7 without tying up your main computer is to host it on a QNAP NAS compatible with Ubuntu Linux Station, setting up a dedicated Ubuntu desktop environment there.

The typical scenario is to deploy Ubuntu 24.04 (or another supported version) within Linux Station, access the desktop via VNC, and manage the system as if it were an independent PC, but with the storage and availability of the NAS.

The general process for setting it up is this:

- Log in to QTS and open the App Center from QNAP.

- Search and install Ubuntu Linux Station from the application catalog.

- Launch Ubuntu Linux Station and choose the version of Ubuntu to download (for example, 24.04).

- Wait for the image to download and install automatically.

- Once completed, use the VNC link displayed by Linux Station to open the remote desktop.

- Log into Ubuntu using your NAS account credentials.

- Open a terminal and run the official Moltbot installation command provided on their website.

- Follow the on-screen instructions to complete the initial agent setup.

When you're finished, you'll have Moltbot running inside that "encapsulated" Ubuntu on the NAS. It's isolated from your everyday PC but available 24/7. If you want other devices on your network to access its web interface, you can add a virtual network adapter in Linux Station so that Ubuntu gets a separate IP address, and then use that address to connect from other machines.

Model configuration and use of API proxies

By default, Moltbot is oriented to the official Anthropic API (Claude). However, this route has drawbacks: high prices, potential regional restrictions, and a limited number of available models. Therefore, many users prefer to configure the agent to use an OpenAI-compatible API proxy.

Moltbot's "openai-compatible" mode allows you to connect to third-party services that expose an endpoint using the same protocol as the OpenAI API. Through a proxy of this type, you can access models from Claude, GPT, Gemini, and others, typically at lower rates and, according to these providers, with greater stability thanks to load balancing.

The key aspects of this type of configuration revolve around several concepts:

- “openai-compatible” provider that accepts OpenAI-style calls (completions or responses).

- Custom baseUrl where requests are sent, for example https://api.apiyi.com/v1.

- Managing multiple models from the same provider: Claude Sonnet, Claude Opus, GPT-4o, Gemini, etc.

- Cost optimization with discounts of 40-60% compared to the official prices of some models.

- Greater stability and less rate limiting thanks to the proxy's backend.

Preparations: Installing Moltbot and obtaining the proxy API key

Before touching configuration files, make sure Moltbot is properly installed and up to date. In many Node.js environments, it is enough to check the version from the command line and, if missing, install the latest stable release.

Typical system requirements include Node.js 22 or higher, disk space for the agent's configuration and memory, and sufficient CPU/RAM resources depending on the tools and plugins you plan to use. For a shared cluster, something close to 2 vCPUs and 2 GB of RAM is generally recommended as a minimum.

In parallel, you will need to obtain an API key from the proxy service of your choice, for example a platform that offers access to Claude, GPT, and Gemini models through an OpenAI-compatible endpoint. Upon signing up, you will receive a private key (of the form sk-xxxxxxxx), the base URL of the API, and the list of supported models.

The configuration elements you should note are basically three:

- API Key: the secret key that identifies your account to the proxy.

- Base URL: for example https://api.apiyi.com/v1. It is important to include the /v1 suffix.

- Model name(s): identifiers such as claude-sonnet-4-20250514, gpt-4o or gemini-2.0-flash.

Method 1: Permanent configuration using config.json

The most robust way to configure the model provider in Moltbot is to edit your config.json file. This is where the "providers" and the main agent model are defined. It's a persistent configuration: you create it once and it's maintained across future reboots.

Depending on the operating system, the configuration file is usually located in paths such as:

- macOS and Linux: ~/.clawdbot/config.json or ~/.moltbot/config.json, depending on the migration phase.

- Windows: %USERPROFILE%\.clawdbot\config.json (and the equivalent under the new name when applicable).

From Moltbot's own command line you can list the current configuration and see the exact file path. Once located, you just need to add a models.providers block defining the proxy, its models, and the primary model that the agent will use by default.

This block specifies key parameters such as baseUrl, apiKey, the type of API (openai-completions or openai-responses), whether the standard authorization header is used, and the model list with each model's context window (contextWindow) and maximum output tokens (maxTokens).
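To make the shape of such a block more concrete, the sketch below assembles one in Python and prints it as JSON. Only the key names quoted above (models.providers, baseUrl, apiKey, contextWindow, maxTokens and the two API types) come from this guide; the provider name "proxy", the "api", "id" and "agent" keys, their nesting, and the numeric values are assumptions made for illustration, so check them against the schema of your Moltbot version before copying anything.

```python
import json


def build_provider_config(base_url: str, api_key: str, models: list, primary: str) -> dict:
    """Assemble a provider block; the nesting and several key names are assumptions."""
    return {
        "models": {
            "providers": {
                "proxy": {  # hypothetical provider name
                    "baseUrl": base_url,
                    "apiKey": api_key,
                    "api": "openai-completions",  # or "openai-responses"
                    "models": models,
                }
            }
        },
        # Hypothetical location for the default model selection.
        "agent": {"model": {"primary": primary}},
    }


config = build_provider_config(
    base_url="https://api.apiyi.com/v1",
    api_key="sk-xxxxxxxx",  # placeholder, never commit a real key
    models=[
        # Illustrative limits; use the values your provider documents.
        {"id": "claude-sonnet-4-20250514", "contextWindow": 200000, "maxTokens": 8192},
    ],
    primary="claude-sonnet-4-20250514",
)
print(json.dumps(config, indent=2))
```

Generating the file from code rather than by hand makes it harder to leave a trailing comma or a missing /v1 suffix in the final JSON.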

Method 2: Use config.yaml instead of JSON

If you prefer a more readable format, Moltbot also allows you to configure the agent with YAML, using a config.yaml file in the same configuration directory. The semantics are similar; only the syntax changes.

In YAML you define the models: providers: section with the same type of information (base URL, API key, API type, model list) plus an agent: block where you specify which model is the primary one and which are the backups. It's a more convenient alternative if you tend to maintain complex configurations by hand.

There is even a minimal YAML configuration in which you only specify a model, the openai-compatible provider, the base URL, and the key. This is useful for quick tests, although in serious environments it's important to define several models and fallback strategies.

Method 3: Environment variables and the .env file

When you want to run temporary tests or integrations in CI/CD, using environment variables is much more practical than modifying persistent configuration files. Moltbot understands several predefined variables for the LLM provider, model, API key, and base URL.

In a Unix environment you can export them directly in the shell before launching the Gateway, or save them to a .env file within the Moltbot configuration directory, which will be read automatically when the service starts.

This approach makes it easy to switch providers or models between deployments, with no need to manually edit JSON or YAML. You simply change the variables and restart the Gateway.
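The .env format itself is just KEY=VALUE lines, so it is easy to check a file before handing it to the Gateway. The parser below is a simplified sketch (no multi-line values or variable expansion), and the variable names in the usage example are hypothetical, since the exact names Moltbot reads are not listed here.

```python
def parse_env(text: str) -> dict[str, str]:
    """Parse simple KEY=VALUE lines, skipping blank lines and # comments."""
    values: dict[str, str] = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        # Drop optional surrounding quotes around the value.
        values[key.strip()] = value.strip().strip('"').strip("'")
    return values


# Hypothetical variable names, for illustration only.
sample = """
# provider settings
LLM_BASE_URL=https://api.apiyi.com/v1
LLM_API_KEY="sk-xxxxxxxx"
LLM_MODEL=claude-sonnet-4-20250514
"""
settings = parse_env(sample)
```

A quick check such as `settings["LLM_BASE_URL"].endswith("/v1")` catches the most common mistake before the service is restarted.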

Method 4: Command-line configuration

Another very flexible option is to use Moltbot's own configuration commands directly, with commands in the style of moltbot config set to fill in each key interactively or from within a script.

This is how you can automate the entire model provider setup (base URL, API key, API type, primary model, authentication, etc.) in a simple shell script. You can even end the script with a gateway reboot and a message prompting you to run the diagnostic utilities.

This method is ideal when you deploy Moltbot on different servers or containers and you want to always apply the same configuration template, only modifying the API key or some specific parameters.
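One way to keep such a template in a single place is to describe the settings as a nested dictionary and generate the command lines from it. The sketch below only prints the commands; the dotted-key syntax and the argument order after moltbot config set are assumptions, so confirm them with the CLI's help output before running anything.

```python
import shlex


def flatten(config: dict, prefix: str = ""):
    """Yield (dotted.key, value) pairs from a nested dictionary."""
    for key, value in config.items():
        path = f"{prefix}.{key}" if prefix else key
        if isinstance(value, dict):
            yield from flatten(value, path)
        else:
            yield path, str(value)


def to_commands(config: dict, cli: str = "moltbot") -> list[str]:
    """Build shell-quoted 'config set' command lines (assumed syntax)."""
    return [shlex.join([cli, "config", "set", key, value]) for key, value in flatten(config)]


template = {"models": {"providers": {"proxy": {"baseUrl": "https://api.apiyi.com/v1"}}}}
for command in to_commands(template):
    print(command)
```

Because shlex.join quotes each argument, values containing spaces or shell metacharacters end up safely quoted in the generated script.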

Verify the configuration and diagnose problems

Once the “openai-compatible” provider is configured, it is essential to check that everything is working correctly, both to avoid silly mistakes (mistyped URLs, incomplete keys) and to confirm that the model responds normally.

Moltbot itself incorporates diagnostic commands that can check if the gateway is running, if the provider is correctly defined, if the API key is valid, and if the specified model is available. They can also make a test call to confirm the connection.

You can also send test messages to the model with specific commands and examine API call logs in real time or filter the latest requests. This is especially useful when debugging configuration errors or performance issues.
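Independently of Moltbot's own commands, you can also test the proxy directly, since an OpenAI-compatible endpoint accepts a POST to /chat/completions with a Bearer token. The sketch below only builds the request so that you can inspect it first; the function name is illustrative and the key is a placeholder.

```python
import json
import urllib.request


def build_chat_request(base_url: str, api_key: str, model: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) a minimal OpenAI-style chat completion request."""
    url = base_url.rstrip("/") + "/chat/completions"
    body = json.dumps({
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": 16,  # keep the test call cheap
    }).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        method="POST",
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
    )


request = build_chat_request("https://api.apiyi.com/v1", "sk-xxxxxxxx", "gpt-4o", "ping")
```

To actually send it, pass the request to `urllib.request.urlopen(request, timeout=30)` and read the JSON response; an HTTP error at this stage points to the proxy or the key rather than to Moltbot.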

Among the most common mistakes when integrating an API proxy with Moltbot are:

- Connection refused, caused by a mistyped baseUrl or a service that is down.

- 401 Unauthorized, caused by an incorrect or expired API key.

- 404 Model not found due to a misspelled model identifier.

- 429 Rate limited when the allowed frequency of requests is exceeded.

- 500 Internal error due to temporary issues on the provider's side.
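The list above can be turned into a small lookup that maps a failed test call to its most likely cause. This is only a sketch for your own scripts; the function name and the wording of the hints are not taken from Moltbot.

```python
from typing import Optional

# Status code -> most likely cause, following the list above.
HINTS = {
    401: "Check the API key: it may be incorrect or expired.",
    404: "Check the model identifier for typos.",
    429: "Rate limited: slow down or raise your quota.",
    500: "Provider-side error: retry later or switch to a fallback model.",
}


def diagnose(status: Optional[int]) -> str:
    """Return a hint for an HTTP status; None means the connection itself failed."""
    if status is None:
        return "Connection refused: check baseUrl and that the service is up."
    return HINTS.get(status, f"Unexpected status {status}: enable debug logging.")
```

Passing None for the status covers the case where no HTTP response arrived at all, which is a network or URL problem rather than an API one.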

If you need more information, you can start the Gateway in debug mode, either by using a more verbose logging level or by setting an environment variable that enables "debug mode." This will show you the details of the requests and responses, which is very useful when something doesn't quite add up.

Supported models and selection according to use case

By working with an API proxy, you gain access to a fairly extensive list of models, which includes different families: Claude from Anthropic, GPT from OpenAI, Gemini from Google, and even others like Grok, DeepSeek or LLaMA, depending on the provider.

In practice, the same proxy can offer "top" variants and lightweight alternatives optimized for cost or speed: models such as Claude Opus 4.5 for deep reasoning, Claude Sonnet 4 with a very attractive price-performance ratio, GPT-4o for multimodal capabilities, or Gemini 2.0 Flash and Pro with long contexts of up to one million tokens.

Many users end up configuring multiple model routing paths within Moltbot, so that the agent uses one model for programming tasks, another for complex reasoning, another for quick responses, and a default one for general use.

Furthermore, it is common to define a sequential fallback strategy in which, if the primary model fails or is saturated, the system automatically switches to a backup one (for example, from Claude Sonnet to Claude Opus or to GPT-4o) without you having to intervene.
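The logic of a sequential fallback is simple enough to sketch in a few lines: try each model in order and return the first answer that arrives. The function below is a generic illustration, not Moltbot's implementation; `call` stands for whatever function actually sends the prompt to a given model.

```python
from typing import Callable, Sequence


def call_with_fallback(models: Sequence[str], call: Callable[[str], str]) -> tuple[str, str]:
    """Try each model in order; return (model_used, response) from the first success."""
    errors = []
    for model in models:
        try:
            return model, call(model)
        except Exception as exc:  # in real code, catch only API and network errors
            errors.append(f"{model}: {exc}")
    raise RuntimeError("all models failed: " + "; ".join(errors))
```

Ordering the list from preferred to last resort keeps the common case cheap, while the collected error messages make it clear why each earlier model was skipped.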

Using Moltbot on deployment platforms like Zeabur

If you don't want to struggle with servers and manual deployments, you can also run Moltbot on PaaS platforms like Zeabur, which offer pre-configured templates to quickly set up the Gateway.

In these templates, the service automatically generates a secure Gateway token and a URL to access the Web UI with the token already embedded, so you just have to open the link to start chatting with the agent and verify that the integration with your AI provider works correctly.

In this type of deployment, environment variables such as ZEABUR_AI_HUB_API_KEY, ANTHROPIC_API_KEY or OPENAI_API_KEY are typically used. From the provider's control panel, you can also define the Telegram bot token, restart the service, and manually approve the linking of your account from the container terminal.

The choice of default model varies depending on the API key you use. If you use the platform's own AI hub, it might default to "gpt-5-mini" or something similar; if you configure Anthropic directly, the agent might choose Claude Opus as its primary model. In either case, you can always change the preferred model from Moltbot's web UI or its configuration commands.

In this context, it is also important to define persistent volumes where the configuration and sessions are stored:

- Configuration directories, sessions, and credentials (for example, /home/node/.clawdbot).

- Working folders and memory files (project paths, indexed data, etc.).

Ultimately, Moltbot is a tool with enormous potential but with significant technical and security requirements. If you're familiar with concepts like remote administration APIs, sandboxing, localhost, reverse proxies, and VPNs, you'll find it relatively easy to keep it under control and get the most out of it. If those terms sound like gibberish to you, it might be best to wait, get some more training, or limit its use to very isolated environments.

The key takeaway is simple: Moltbot can become the perfect ally to automate your digital life, provided that you run it on an isolated machine or container, protect the Gateway with tokens and a VPN, carefully configure its model providers using configuration files, variables, or commands, and periodically review its activity and API consumption. Only then will you get the benefits of a powerful agent without exposing your data or infrastructure more than necessary.

Editor specialized in technology and internet issues with more than ten years of experience in different digital media. I have worked as an editor and content creator for e-commerce, communication, online marketing and advertising companies. I have also written on economics, finance and other sectors websites. My work is also my passion. Now, through my articles in Tecnobits, I try to explore all the news and new opportunities that the world of technology offers us every day to improve our lives.